projection (linear algebra)

Projection

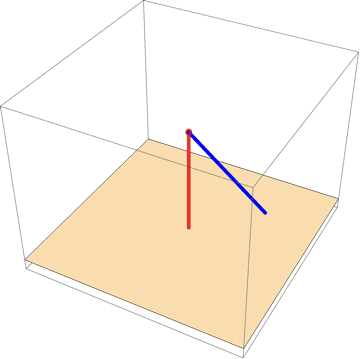

The image above shows an orthogonal projection (red) and a general projection (blue).

A projection is a linear transformation \[P\] from a vector space to itself such that \[P\circ P=P\]; that is, applying \[P\] twice to any vector gives the same result as applying it once.
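As a quick numerical check of the defining property, the sketch below applies a small matrix once and then twice to the same vector. The matrix, the vector, and the helper name `matvec` are chosen here purely for illustration; plain Python lists are used so that no library is assumed.

```python
def matvec(M, v):
    """Multiply a matrix M (list of rows) by a vector v (list of numbers)."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]

# Illustrative projection onto the x-axis along the direction (1, -1).
# It satisfies P(Pv) = Pv, but it is not an orthogonal projection.
P = [[1.0, 1.0],
     [0.0, 0.0]]

v = [3.0, 2.0]
once = matvec(P, v)       # P v
twice = matvec(P, once)   # P (P v)

print(once, twice)
```

Both results agree, which is exactly what \[P\circ P=P\] requires for this particular vector.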

Intuition (for orthogonal projection)

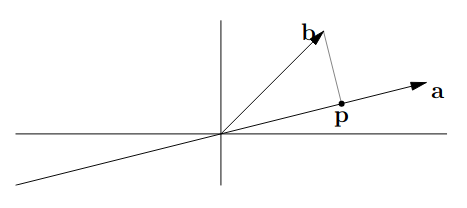

Imagine we have two vectors \[\mathbf{a}\] and \[\mathbf{b}\], and we are asked to find the point on the line through \[\mathbf{a}\] that is closest to \[\mathbf{b}\]. Call this point \[\mathbf{p}\]. The closest point is the one for which the line from \[\mathbf{b}\] to \[\mathbf{p}\] is orthogonal (perpendicular) to \[\mathbf{a}\].

If we think of \[\mathbf{p}\] as an approximation of \[\mathbf{b}\], we can define \[\mathbf{e}=\mathbf{b}-\mathbf{p}\] as the error in that approximation: the vector from \[\mathbf{p}\] to \[\mathbf{b}\], whose length is the perpendicular distance from \[\mathbf{b}\] to the line. \[\mathbf{e}\] is also sometimes known as the vector rejection of \[\mathbf{b}\] from \[\mathbf{a}\], denoted as \[\text{oproj}_{\mathbf{a}}\mathbf{b}\].

Since \[\mathbf{p}\] lies on the line through \[\mathbf{a}\], we have \[\mathbf{p}=x \mathbf{a}\] for some scalar \[x\]. Two vectors are orthogonal exactly when their dot product is zero, and here \[\mathbf{e}=\mathbf{b}-x\mathbf{a}\] (after substituting \[\mathbf{p}\]) is orthogonal to \[\mathbf{a}\]. Therefore, using the fact that \[\mathbf{a}\cdot \mathbf{b}=\mathbf{a}^{T}\mathbf{b}\],

\[\mathbf{a}^{T}\left( \mathbf{b}-x\mathbf{a} \right)=0\implies x\mathbf{a}^{T}\mathbf{a}=\mathbf{a}^{T}\mathbf{b}\implies x=\frac{\mathbf{a}^{T}\mathbf{b}}{\mathbf{a}^{T}\mathbf{a}}\]

and \[\mathbf{p}=x\mathbf{a}=\mathbf{a}\frac{\mathbf{a}^{T}\mathbf{b}}{\mathbf{a}^{T}\mathbf{a}}\].
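The formula for \[\mathbf{p}\] can be checked numerically. The following is a minimal sketch in plain Python; the function name `project_onto_line` and the sample vectors are illustrative choices, not part of the derivation.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))

def project_onto_line(a, b):
    """Return (x, p, e) where p = x*a is the projection of b onto the
    line through a, and e = b - p is the error vector."""
    aa = dot(a, a)
    if aa == 0:
        raise ValueError("a must be a nonzero vector")
    x = dot(a, b) / aa                       # x = a^T b / a^T a
    p = [x * ai for ai in a]                 # p = x a
    e = [bi - pi for bi, pi in zip(b, p)]    # e = b - p
    return x, p, e

a = [2.0, 1.0]
b = [3.0, 4.0]
x, p, e = project_onto_line(a, b)

# The error should be orthogonal to a, so this dot product should be zero.
print(x, p, e, dot(a, e))
```

For these vectors \[\mathbf{a}^{T}\mathbf{b}=10\] and \[\mathbf{a}^{T}\mathbf{a}=5\], so \[x=2\] and \[\mathbf{p}=(4,2)\].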

Now, we would like to write this projection in terms of a projection matrix (essentially a transformation matrix) \[P\] such that \[\mathbf{p}=P\mathbf{b}\]. Since \[\mathbf{p}=x\mathbf{a}\], we have \[x\mathbf{a}=P\mathbf{b}\]. Note that this does not mean \[\mathbf{a}=\mathbf{b}\], because \[x\] is a scalar while \[P\] is a matrix. Rather, it tells us that applying \[P\] to \[\mathbf{b}\] always yields a scalar multiple of \[\mathbf{a}\].

To find the projection matrix, we know \[\mathbf{p}=\frac{\mathbf{a}^{T}\mathbf{b}}{\mathbf{a}^{T}\mathbf{a}}\mathbf{a}=\frac{\mathbf{a}\mathbf{a}^{T}\mathbf{b}}{\mathbf{a}^{T}\mathbf{a}}=\frac{\mathbf{a}\mathbf{a}^{T}}{\mathbf{a}^{T}\mathbf{a}}\mathbf{b}\]. The vector \[\mathbf{a}\] can be moved to the left, giving \[\mathbf{a}\mathbf{a}^{T}\], because \[\mathbf{a}^{T}\mathbf{b}\] is just a \[1\times 1\] matrix (essentially a scalar), so \[\left( \mathbf{a}^{T}\mathbf{b} \right)\mathbf{a}=\mathbf{a}\left( \mathbf{a}^{T}\mathbf{b} \right)\], and by associativity of matrix multiplication \[\mathbf{a}\left( \mathbf{a}^{T}\mathbf{b} \right)=\left( \mathbf{a}\mathbf{a}^{T} \right)\mathbf{b}\]. Then, by comparison with \[\mathbf{p}=P\mathbf{b}\], we see that \[P=\frac{\mathbf{a}\mathbf{a}^{T}}{\mathbf{a}^{T}\mathbf{a}}\], which is a square \[n\times n\] matrix when \[\mathbf{a}\] has \[n\] components. With this understanding, applying \[P\] a second time changes nothing, i.e. \[P(P\mathbf{b})=P\mathbf{b}\], because \[\mathbf{p}\] is already on the line through \[\mathbf{a}\], so there is nothing more to project. Therefore, projection matrices have the property \[P^{2}=P\].
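To make the construction concrete, the sketch below builds \[P=\frac{\mathbf{a}\mathbf{a}^{T}}{\mathbf{a}^{T}\mathbf{a}}\] for a sample vector and compares \[P^{2}\] with \[P\]. The helper names (`projection_matrix`, `matvec`, `matmul`) and the sample vectors are assumptions made for illustration; comparisons use a small tolerance because of floating-point rounding.

```python
def projection_matrix(a):
    """Return P = a a^T / (a^T a) as a list of rows."""
    aa = sum(ai * ai for ai in a)
    if aa == 0:
        raise ValueError("a must be a nonzero vector")
    return [[ai * aj / aa for aj in a] for ai in a]

def matvec(M, v):
    """Matrix-vector product."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def matmul(A, B):
    """Matrix-matrix product for lists of rows."""
    cols = list(zip(*B))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in A]

a = [2.0, 1.0]
b = [3.0, 4.0]

P = projection_matrix(a)   # 2 x 2 because a has 2 components
p = matvec(P, b)           # projection of b onto the line through a
P2 = matmul(P, P)          # should match P up to rounding

print(P, p, P2)
```

Here `p` comes out as a multiple of `a`, and `P2` agrees with `P` entry by entry up to rounding.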

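Additionally, an orthogonal projection matrix \[P\] has the property \[P^{T}=P\]. By the transpose rule for products (see matrix transpose), \[\left( \mathbf{a}\mathbf{a}^{T} \right)^{T}=\left( \mathbf{a}^{T} \right)^{T}\mathbf{a}^{T}=\mathbf{a}\mathbf{a}^{T}\], and since \[\mathbf{a}^{T}\mathbf{a}\] is a scalar, \[P^{T}=\frac{\left( \mathbf{a}\mathbf{a}^{T} \right)^{T}}{\mathbf{a}^{T}\mathbf{a}}=\frac{\mathbf{a}\mathbf{a}^{T}}{\mathbf{a}^{T}\mathbf{a}}=P\].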

Definition

A projection on a vector space \[V\] is a linear operator \[P:V\to V\] such that \[P^{2}=P\].

A square matrix \[P\] is known as a projection matrix if it is equal to its square, i.e. if \[P^{2}=P\]. Additionally, if \[P^{2}=P=P^{T}\] then \[P\] is an orthogonal projection matrix.
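The two conditions in this definition can be tested directly. The sketch below is one possible way to do so in plain Python; the function names `is_projection` and `is_orthogonal_projection`, the tolerance, and the three sample matrices are all illustrative assumptions.

```python
def matmul(A, B):
    """Matrix-matrix product for lists of rows."""
    cols = list(zip(*B))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in A]

def transpose(A):
    """Transpose of a matrix given as a list of rows."""
    return [list(col) for col in zip(*A)]

def close(A, B, tol=1e-12):
    """Entrywise comparison of two same-shaped matrices within tol."""
    return all(abs(x - y) <= tol
               for row_a, row_b in zip(A, B)
               for x, y in zip(row_a, row_b))

def is_projection(P, tol=1e-12):
    """True if P^2 = P (up to tol)."""
    return close(matmul(P, P), P, tol)

def is_orthogonal_projection(P, tol=1e-12):
    """True if P^2 = P and P^T = P (up to tol)."""
    return is_projection(P, tol) and close(transpose(P), P, tol)

oblique = [[1.0, 1.0], [0.0, 0.0]]         # idempotent, not symmetric
orthogonal = [[0.5, 0.5], [0.5, 0.5]]      # idempotent and symmetric
not_projection = [[2.0, 0.0], [0.0, 1.0]]  # squaring changes it

for name, M in [("oblique", oblique),
                ("orthogonal", orthogonal),
                ("not_projection", not_projection)]:
    print(name, is_projection(M), is_orthogonal_projection(M))
```

The first matrix is a projection that is not orthogonal, the second satisfies both conditions, and the third satisfies neither.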

Orthogonal projection

An orthogonal projection is a linear transformation that maps every vector in a vector space onto a subspace in such a way that the error between the original vector and its projection is always perpendicular to the subspace. Formally, if \[P\] is an orthogonal projection onto a subspace \[S\] of a vector space \[V\], then for any vector \[\mathbf{b}\in V\] the projected vector \[\mathbf{p}=P\mathbf{b}\] lies in \[S\], and the error \[\mathbf{e}=\mathbf{b}-\mathbf{p}\] is orthogonal to every vector in \[S\].
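As an illustration of the orthogonality condition, the sketch below projects a vector onto a two-dimensional subspace of \[\mathbb{R}^{3}\]. It assumes, beyond what is stated above, that \[S\] is described by an orthonormal basis \[\mathbf{q}_{1},\dots,\mathbf{q}_{k}\], in which case the orthogonal projection is \[\mathbf{p}=\sum_{i}\left( \mathbf{q}_{i}\cdot \mathbf{b} \right)\mathbf{q}_{i}\]. The function name and sample vectors are hypothetical.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))

def project_onto_subspace(basis, b):
    """Orthogonal projection of b onto span(basis).

    Assumes `basis` is orthonormal; the formula below is not valid
    for a general (non-orthonormal) spanning set.
    """
    p = [0.0] * len(b)
    for q in basis:
        c = dot(q, b)                               # component of b along q
        p = [pi + c * qi for pi, qi in zip(p, q)]   # accumulate c * q
    return p

# Orthonormal basis of a plane in R^3 (chosen for illustration).
q1 = [1.0, 0.0, 0.0]
q2 = [0.0, 0.6, 0.8]
b = [1.0, 2.0, 3.0]

p = project_onto_subspace([q1, q2], b)
e = [bi - pi for bi, pi in zip(b, p)]

# Both dot products should vanish (up to rounding) since e is orthogonal to S.
print(p, e, dot(q1, e), dot(q2, e))
```

Checking the error against each basis vector is enough, because any vector in \[S\] is a linear combination of the basis vectors.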

Vector projection

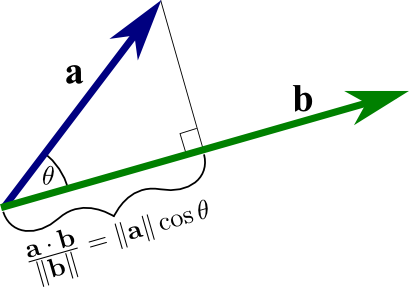

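The equation presented in the figure is the formula for the magnitude of the vector projection, \[\left\lVert \text{proj}_{\mathbf{b}}\mathbf{a} \right\rVert=\frac{\mathbf{a}\cdot \mathbf{b}}{\left\lVert \mathbf{b} \right\rVert}=\frac{\left\lVert \mathbf{a} \right\rVert \left\lVert \mathbf{b} \right\rVert\cos\theta}{\left\lVert \mathbf{b} \right\rVert}=\left\lVert \mathbf{a} \right\rVert\cos\theta\], where \[\theta\] is the angle between \[\mathbf{a}\] and \[\mathbf{b}\]. As written, this holds when \[\theta\] is at most \[90^{\circ}\]; for an obtuse angle the quotient is negative, and the magnitude is its absolute value.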

The vector projection is a special case of the orthogonal projection that focuses on projecting one vector onto another nonzero vector. In other words, the vector projection of a vector \[\mathbf{a}\] onto a nonzero vector \[\mathbf{b}\] is simply the orthogonal projection of \[\mathbf{a}\] onto a straight line spanned by \[\mathbf{b}\].

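Formally, its formula is given by multiplying the signed length of the projection, i.e. the dot product \[\mathbf{a}\cdot \hat{\mathbf{b}}\] (the bracketed segment along \[\mathbf{b}\] in the figure), by the direction of \[\mathbf{b}\], the unit vector \[\hat{\mathbf{b}}=\frac{\mathbf{b}}{\left\lVert \mathbf{b} \right\rVert}\]. Mathematically, we write it as \[\text{proj}_{\mathbf{b}}\mathbf{a}=\left( \mathbf{a}\cdot \hat{\mathbf{b}} \right)\hat{\mathbf{b}}\]. Then, substituting \[\hat{\mathbf{b}}\], \[\text{proj}_{\mathbf{b}}\mathbf{a}=\left( \mathbf{a}\cdot \frac{\mathbf{b}}{\left\lVert \mathbf{b} \right\rVert} \right)\frac{\mathbf{b}}{\left\lVert \mathbf{b} \right\rVert}=\frac{\mathbf{a}\cdot \mathbf{b}}{\left\lVert \mathbf{b} \right\rVert^{2}}\mathbf{b}=\frac{\mathbf{a}\cdot \mathbf{b}}{\mathbf{b}\cdot \mathbf{b}}\mathbf{b}\].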

Note that \[\text{proj}_{\mathbf{b}}\mathbf{a}\] is in fact a vector, not a scalar (unlike the result of a dot product).
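The distinction between the scalar and the vector result can be seen in a short sketch. The function names `scalar_projection` and `vector_projection` and the sample vectors are illustrative choices; only Python's standard `math` module is used.

```python
import math

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))

def scalar_projection(a, b):
    """Signed length of the projection of a onto b: a . b / ||b||."""
    norm_b = math.sqrt(dot(b, b))
    if norm_b == 0:
        raise ValueError("b must be a nonzero vector")
    return dot(a, b) / norm_b

def vector_projection(a, b):
    """Vector projection of a onto b: (a . b / b . b) * b."""
    bb = dot(b, b)
    if bb == 0:
        raise ValueError("b must be a nonzero vector")
    scale = dot(a, b) / bb
    return [scale * bi for bi in b]

b = [2.0, 0.0]
a_acute = [3.0, 4.0]     # angle with b is less than 90 degrees
a_obtuse = [-3.0, 4.0]   # angle with b is greater than 90 degrees

print(scalar_projection(a_acute, b), vector_projection(a_acute, b))
print(scalar_projection(a_obtuse, b), vector_projection(a_obtuse, b))
```

The scalar projection is a single number whose sign depends on the angle, while the vector projection is a vector lying along \[\mathbf{b}\].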