Dot product

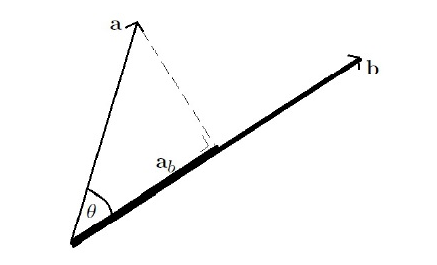

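We start with the most fundamental definition: the dot product of \[\mathbf{a}\] and \[\mathbf{b}\] is the product of \[\left\lVert \mathbf{b} \right\rVert\] and \[\left\lVert \mathbf{a}_{b} \right\rVert\], where \[\mathbf{a}_{b}\] is the projection of \[\mathbf{a}\] onto \[\mathbf{b}\]. If the angle between the two vectors is obtuse, the projection points opposite to \[\mathbf{b}\] and the product takes a negative sign. The dot product is used when you want to know how far one vector extends along another: dividing it by \[\left\lVert \mathbf{b} \right\rVert\] gives the signed magnitude of the projection of \[\mathbf{a}\] onto \[\mathbf{b}\].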

Intuitive understanding

Dot products are very geometric objects. They encode relative information about vectors: specifically, they tell us "how much" of one vector lies in the direction of another. For instance, if you pull a box 10 meters along the ground with a force applied at an inclined angle, your force vector has a horizontal component and a vertical component. The dot product of the force and the displacement picks out only the part of the force acting in the direction of the displacement, i.e. the direction in which the box moved, and multiplies it by the distance moved. This is important because work is defined as force multiplied by displacement, where the force is understood to be the component of the force in the direction of the displacement.

To demonstrate, say we have two vectors, \[(3,0)\] and \[(0,4)\]. Their dot product, \[(3,0)\cdot(0,4)=3\times 0+0\times 4=0\], tells us that neither vector has a component in the direction of the other, i.e. they are orthogonal to each other.

Consider a man pulling an object along the ground with force \[\vec{a}\] while the object undergoes displacement \[\vec{b}\]. The work done by the man is the length of the projection of the force vector \[\vec{a}\] onto \[\vec{b}\] times the distance the object travels, \[\left\lVert \vec{b} \right\rVert\]. Writing \[t\] for the angle between \[\vec{a}\] and \[\vec{b}\], the work is \[W=Fs=(\left\lVert \vec{a} \right\rVert\cos t)\left\lVert \vec{b} \right\rVert=\vec{a}\cdot \vec{b}\].
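As a rough numerical illustration of the work formula, the sketch below compares the two ways of computing \[W\]. The numbers (a 50 N pull at 60 degrees, a 10 m horizontal displacement) and the helper name `dot` are assumptions made up for this example, not values from the text.

```python
import math

def dot(a, b):
    """Coordinate dot product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))

# Hypothetical values: a 50 N pull at 60 degrees above the ground,
# with the object moving 10 m horizontally.
force_magnitude = 50.0
angle = math.radians(60.0)
force = (force_magnitude * math.cos(angle), force_magnitude * math.sin(angle))
displacement = (10.0, 0.0)

# Work as a dot product of force and displacement.
work_from_dot = dot(force, displacement)

# Work as (component of force along the displacement) * distance.
work_from_projection = force_magnitude * math.cos(angle) * math.hypot(*displacement)

print(work_from_dot, work_from_projection)
```

Both values come out at about 250 J (up to floating-point rounding): the vertical part of the force contributes nothing because the displacement has no vertical component.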

Coordinate definition

The dot product of two vectors \[\mathbf{a}=\left( a_{1},a_{2},\dots,a_{n} \right)\] and \[\mathbf{b}=(b_{1},b_{2},\dots,b_{n})\], where \[n\] is the dimension of the vector space and the components are specified with respect to an orthonormal basis, is defined as \[\mathbf{a}\cdot \mathbf{b}=\sum_{i=1}^{n}a_{i}b_{i}=a_{1}b_{1}+a_{2}b_{2}+\cdots+a_{n}b_{n}\]. If \[\mathbf{a}\] and \[\mathbf{b}\] are treated as column vectors, the dot product can also be written as a matrix product, \[\mathbf{a}\cdot \mathbf{b}=\mathbf{a}^{T}\mathbf{b}\]. It is important to remember that the dot product \[\mathbf{a}\cdot \mathbf{b}\] is a scalar, not a vector.

The phrase "specified with respect to an orthonormal basis" tells us that we have chosen a particular set of basis vectors, e.g. the standard basis \[\mathbf{e}_{1},\mathbf{e}_{2},\mathbf{e}_{3},\dots,\mathbf{e}_{n}\], that are orthonormal: each \[\mathbf{e}_{i}\] has length 1 and any two distinct basis vectors are perpendicular to each other. In simple words, this is our "standard" Euclidean space. By specifying our vectors in terms of this orthonormal basis, we are in fact defining \[\mathbf{a}=a_{1}\mathbf{e}_{1}+a_{2}\mathbf{e}_{2}+\dots+a_{n}\mathbf{e}_{n}\].

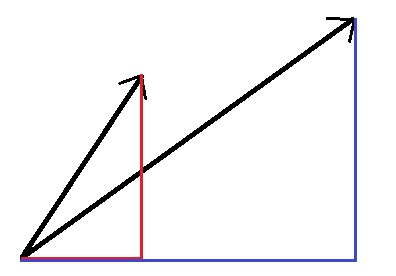

We will now demonstrate geometrically why the formula takes this form. Assume we have two vectors, \[\mathbf{a}\] and \[\mathbf{b}\], which are decomposed into their horizontal and vertical components, \[\mathbf{a}=a_{x}\mathbf{i}+a_{y}\mathbf{j}\] and \[\mathbf{b}=b_{x}\mathbf{i}+b_{y}\mathbf{j}\].

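Then, distributing the product over both sums,

\[\mathbf{a}\cdot \mathbf{b}=(a_{x}\mathbf{i}+a_{y}\mathbf{j})\cdot(b_{x}\mathbf{i}+b_{y}\mathbf{j})=a_{x}b_{x}(\mathbf{i}\cdot \mathbf{i})+a_{x}b_{y}(\mathbf{i}\cdot \mathbf{j})+a_{y}b_{x}(\mathbf{j}\cdot \mathbf{i})+a_{y}b_{y}(\mathbf{j}\cdot \mathbf{j})\]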

We also know from the definition that the dot product of perpendicular vectors is zero and the dot product of parallel vectors is the product of their lengths, so for the unit vectors \[\mathbf{i}\] and \[\mathbf{j}\] we have \[\mathbf{i}\cdot \mathbf{i}=\mathbf{j}\cdot \mathbf{j}=1\] and \[\mathbf{i}\cdot \mathbf{j}=0\]. Substituting these values into our equation, we get \[\mathbf{a}\cdot \mathbf{b}=a_{x}b_{x}+a_{y}b_{y}\].

As an example, the dot product \[\begin{pmatrix} 1\\3\\-5 \end{pmatrix}\cdot \begin{pmatrix} 4\\-2\\-1 \end{pmatrix}\] is \[(1\times 4)+(3\times (-2))+((-5)\times (-1))=4-6+5=3\].
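The coordinate definition translates almost directly into code. The following is a minimal sketch in plain Python; the function name `dot` and the dimension check are choices made for this illustration.

```python
def dot(a, b):
    """Sum of component-wise products, per the coordinate definition."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return sum(x * y for x, y in zip(a, b))

# The worked example: 1*4 + 3*(-2) + (-5)*(-1)
print(dot((1, 3, -5), (4, -2, -1)))  # 3

# The orthogonal pair used earlier: 3*0 + 0*4
print(dot((3, 0), (0, 4)))  # 0
```

Note that the result is a single number, not a vector.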

Geometric definition

In Euclidean space, a Euclidean vector can be pictured as an arrow: its magnitude is the length of the arrow, and its direction is the direction in which the arrow points.

With magnitude and direction in hand, the dot product of two Euclidean vectors \[\mathbf{a}\] and \[\mathbf{b}\] is defined by \[\mathbf{a}\cdot \mathbf{b}=\left\lVert \mathbf{a} \right\rVert \left\lVert \mathbf{b} \right\rVert\cos\theta\], where \[\theta\] is the angle between \[\mathbf{a}\] and \[\mathbf{b}\]. Note that as a consequence of this definition, if \[\mathbf{a}\] and \[\mathbf{b}\] are orthogonal, i.e. the angle between them is \[90^{\circ}\], then \[\mathbf{a}\cdot \mathbf{b}=\left\lVert \mathbf{a} \right\rVert \left\lVert \mathbf{b} \right\rVert\cos 90^{\circ}=0\].
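Rearranging the geometric definition gives \[\cos\theta=\frac{\mathbf{a}\cdot \mathbf{b}}{\left\lVert \mathbf{a} \right\rVert \left\lVert \mathbf{b} \right\rVert}\], which is a common way to recover the angle between two nonzero vectors. The sketch below assumes the coordinate formula for the dot product; the helper names `norm` and `angle_between` are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    """Euclidean length, computed as the square root of a . a."""
    return math.sqrt(dot(a, a))

def angle_between(a, b):
    """Angle in radians between two nonzero vectors."""
    cos_theta = dot(a, b) / (norm(a) * norm(b))
    # Clamp to [-1, 1] to guard against floating-point rounding.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.acos(cos_theta)

# Orthogonal vectors: the angle is a right angle.
print(math.degrees(angle_between((3, 0), (0, 4))))

# A vector along the x-axis and one along the diagonal: roughly 45 degrees.
print(math.degrees(angle_between((1, 0), (1, 1))))
```

The clamp is a defensive choice for this sketch: rounding can push the computed cosine slightly outside \[[-1,1]\], which would make `math.acos` raise an error.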

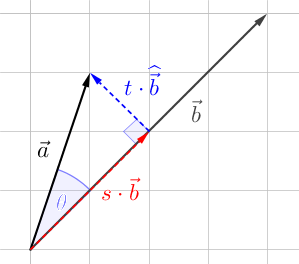

From the diagram above we can infer that the vector \[\mathbf{a}\] can be represented as \[\begin{pmatrix} a_{1}\\a_{2} \end{pmatrix}=s\cdot \mathbf{b}+t\cdot \widehat{\mathbf{b}}\] for some scalars \[s\] and \[t\], where \[\widehat{\mathbf{b}}=\mathbf{b}^{\perp}=\begin{pmatrix} -b_{2}\\b_{1} \end{pmatrix}\]. The vector \[\widehat{\mathbf{b}}\], which is perpendicular to \[\mathbf{b}\], should not be confused with \[\hat{\mathbf{b}}\], which represents the unit vector in the direction of \[\mathbf{b}\].

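Continuing on, \[\begin{pmatrix} a_{1}\\a_{2} \end{pmatrix}=s\cdot \mathbf{b}+t\cdot \widehat{\mathbf{b}}=s\cdot \begin{pmatrix} b_{1}\\b_{2} \end{pmatrix}+t\cdot \begin{pmatrix} -b_{2}\\b_{1} \end{pmatrix}=\begin{pmatrix} sb_{1}-tb_{2}\\sb_{2}+tb_{1} \end{pmatrix}\]; therefore, using the coordinate definition,

\[\mathbf{a}\cdot \mathbf{b}=(sb_{1}-tb_{2})b_{1}+(sb_{2}+tb_{1})b_{2}=s\left(b_{1}^{2}+b_{2}^{2}\right)=s\left\lVert \mathbf{b} \right\rVert^{2}\]

The terms containing \[t\] cancel, so only the component of \[\mathbf{a}\] along \[\mathbf{b}\] contributes. Since \[s\cdot \mathbf{b}\] is the projection of \[\mathbf{a}\] onto \[\mathbf{b}\], its signed length is \[s\left\lVert \mathbf{b} \right\rVert=\left\lVert \mathbf{a} \right\rVert\cos\theta\], and hence \[\mathbf{a}\cdot \mathbf{b}=\left\lVert \mathbf{a} \right\rVert\left\lVert \mathbf{b} \right\rVert\cos\theta\].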
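The decomposition of \[\mathbf{a}\] into a part along \[\mathbf{b}\] and a part along the perpendicular vector can be checked numerically. In this sketch the coefficients are obtained by taking dot products with \[\mathbf{b}\] and with its perpendicular (both have squared length \[b_{1}^{2}+b_{2}^{2}\]); the function names `perp` and `decompose` and the sample vectors are assumptions for illustration.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def perp(b):
    """Rotate a 2D vector by 90 degrees counterclockwise: (b1, b2) -> (-b2, b1)."""
    return (-b[1], b[0])

def decompose(a, b):
    """Return (s, t) such that a = s*b + t*perp(b), for nonzero 2D b."""
    bb = dot(b, b)
    if bb == 0:
        raise ValueError("b must be nonzero")
    s = dot(a, b) / bb
    t = dot(a, perp(b)) / bb
    return s, t

a = (3.0, 4.0)
b = (2.0, 1.0)
s, t = decompose(a, b)
print(s, t)  # 2.0 1.0

# Rebuild a from its two parts: 2*(2, 1) + 1*(-1, 2) = (3, 4)
pb = perp(b)
rebuilt = (s * b[0] + t * pb[0], s * b[1] + t * pb[1])
print(rebuilt)  # (3.0, 4.0)

# Only the part along b contributes to the dot product: a . b = s * ||b||^2
print(dot(a, b), s * dot(b, b))  # 10.0 10.0
```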

Equivalence of both definitions

We will now prove that if the dot product is defined to be \[\mathbf{a}\cdot \mathbf{b}=\sum_{i=1}^{n}a_{i}b_{i}\], then it is also true that \[\mathbf{a}\cdot \mathbf{b}=\left\lVert \mathbf{a} \right\rVert\left\lVert \mathbf{b} \right\rVert\cos\theta\], i.e. these two definitions describe the same operation.

We start by taking the dot product of a vector with itself (assuming it is three-dimensional). The coordinate definition gives \[\mathbf{a}\cdot \mathbf{a}=a_{1}^{2}+a_{2}^{2}+a_{3}^{2}=\left\lVert \mathbf{a} \right\rVert^{2}\], and the geometric definition gives \[\mathbf{a}\cdot \mathbf{a}=\left\lVert \mathbf{a} \right\rVert^{2}\cos(0)=\left\lVert \mathbf{a} \right\rVert^{2}\]. This tells us that if \[\mathbf{a}\] and \[\mathbf{b}\] are the same vector, then the two definitions agree.

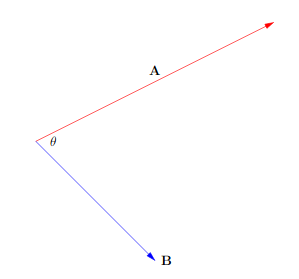

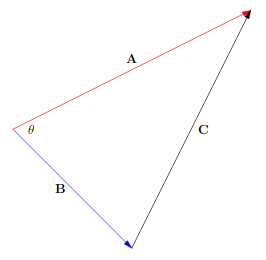

If \[\mathbf{a}\] and \[\mathbf{b}\] are different vectors, we can use the law of cosines to show the equivalence of both definitions.

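In terms of vectors, the two known sides of our triangle are formed by \[\mathbf{a}\] and \[\mathbf{b}\], with the third side described by \[\mathbf{c}=\mathbf{a}-\mathbf{b}\]. The law of cosines tells us that geometrically, \[\left\lVert \mathbf{c} \right\rVert^{2}=\left\lVert \mathbf{a} \right\rVert^{2}+\left\lVert \mathbf{b} \right\rVert^{2}-2 \left\lVert \mathbf{a} \right\rVert \left\lVert \mathbf{b} \right\rVert\cos\theta\]. Then, by the coordinate definition,

\[\left\lVert \mathbf{c} \right\rVert^{2}=\mathbf{c}\cdot \mathbf{c}=(\mathbf{a}-\mathbf{b})\cdot(\mathbf{a}-\mathbf{b})=\mathbf{a}\cdot \mathbf{a}-\mathbf{a}\cdot \mathbf{b}-\mathbf{b}\cdot \mathbf{a}+\mathbf{b}\cdot \mathbf{b}=\left\lVert \mathbf{a} \right\rVert^{2}+\left\lVert \mathbf{b} \right\rVert^{2}-2\,\mathbf{a}\cdot \mathbf{b}\]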

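Comparing the two expressions for \[\left\lVert \mathbf{c} \right\rVert^{2}\] shows that \[\mathbf{a}\cdot \mathbf{b}=\left\lVert \mathbf{a} \right\rVert \left\lVert \mathbf{b} \right\rVert\cos\theta\]. We now justify why \[(\mathbf{a}-\mathbf{b})\cdot(\mathbf{a}-\mathbf{b})\] can be expanded into \[\mathbf{a}\cdot \mathbf{a}-\mathbf{a}\cdot \mathbf{b}-\mathbf{b}\cdot \mathbf{a}+\mathbf{b}\cdot \mathbf{b}\]. First, since \[\mathbf{a}\] and \[\mathbf{b}\] are vectors, \[\mathbf{a}-\mathbf{b}=(a_{1}-b_{1},a_{2}-b_{2},\dots,a_{n}-b_{n})\]. By the coordinate definition, \[(\mathbf{a}-\mathbf{b})\cdot(\mathbf{a}-\mathbf{b})=\sum_{i=1}^{n}(a_{i}-b_{i})(a_{i}-b_{i})\]. Multiplying out each term and splitting the sum gives \[\sum_{i=1}^{n}\left(a_{i}^{2}-a_{i}b_{i}-b_{i}a_{i}+b_{i}^{2}\right)=\sum_{i=1}^{n}a_{i}^{2}-\sum_{i=1}^{n}a_{i}b_{i}-\sum_{i=1}^{n}b_{i}a_{i}+\sum_{i=1}^{n}b_{i}^{2}=\mathbf{a}\cdot \mathbf{a}-\mathbf{a}\cdot \mathbf{b}-\mathbf{b}\cdot \mathbf{a}+\mathbf{b}\cdot \mathbf{b}\].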
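The law-of-cosines argument can be spot-checked on concrete numbers. The sketch below reuses the vectors from the earlier worked example (an arbitrary choice) and confirms that \[\left\lVert \mathbf{a}-\mathbf{b} \right\rVert^{2}\] equals \[\left\lVert \mathbf{a} \right\rVert^{2}+\left\lVert \mathbf{b} \right\rVert^{2}-2\,\mathbf{a}\cdot \mathbf{b}\] when everything is computed from coordinates.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

a = (1, 3, -5)
b = (4, -2, -1)
c = tuple(x - y for x, y in zip(a, b))  # third side of the triangle, c = a - b

# Left side: ||c||^2 computed directly from coordinates.
lhs = dot(c, c)

# Right side: the expansion a.a + b.b - 2 a.b.
rhs = dot(a, a) + dot(b, b) - 2 * dot(a, b)
print(lhs, rhs)  # 50 50

# The cosine obtained from the law of cosines matches a.b / (||a|| ||b||).
norm_a, norm_b = math.sqrt(dot(a, a)), math.sqrt(dot(b, b))
cos_from_law = (dot(a, a) + dot(b, b) - lhs) / (2 * norm_a * norm_b)
cos_from_dot = dot(a, b) / (norm_a * norm_b)
print(math.isclose(cos_from_law, cos_from_dot))  # True
```

A numerical check like this is not a proof, but it is a quick way to catch sign errors in the expansion.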

Properties

Let \[\mathbf{a}\], \[\mathbf{b}\], \[\mathbf{c}\] and \[\mathbf{d}\] be real vectors and \[\alpha\], \[\beta\], \[\gamma\] and \[\delta\] be scalars.

- \[\mathbf{a}\cdot \mathbf{b}=\mathbf{b}\cdot \mathbf{a}\]

- \[(\alpha \mathbf{a}+\beta \mathbf{b})\cdot (\gamma \mathbf{c}+\delta \mathbf{d})=\alpha\gamma(\mathbf{a}\cdot \mathbf{c})+\alpha\delta(\mathbf{a}\cdot \mathbf{d})+\beta\gamma(\mathbf{b}\cdot \mathbf{c})+\beta\delta(\mathbf{b}\cdot\mathbf{d})\]
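Both properties listed above (symmetry and linearity in each argument) can be sanity-checked with integer vectors, where the arithmetic is exact. The vectors, scalars, and the helper `combine` below are arbitrary choices for this sketch.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def combine(alpha, u, beta, v):
    """Return the linear combination alpha*u + beta*v, component by component."""
    return tuple(alpha * x + beta * y for x, y in zip(u, v))

a, b, c, d = (1, 2), (3, -1), (0, 4), (-2, 5)
alpha, beta, gamma, delta = 2, -3, 5, 7

# Symmetry: a . b == b . a
print(dot(a, b) == dot(b, a))  # True

# Bilinearity: expand (alpha a + beta b) . (gamma c + delta d) term by term.
lhs = dot(combine(alpha, a, beta, b), combine(gamma, c, delta, d))
rhs = (alpha * gamma * dot(a, c) + alpha * delta * dot(a, d)
       + beta * gamma * dot(b, c) + beta * delta * dot(b, d))
print(lhs, rhs)  # 483 483
```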