
First fundamental theorem of calculus

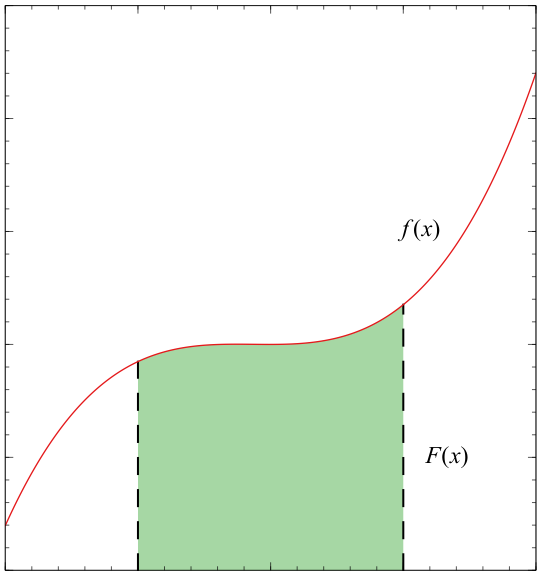

Let \[f\] be a continuous real-valued function defined on a closed interval \[[a,b]\], that is, \[\left\{ x\in\mathbb{R}\mid a\le x\le b \right\}\]. Let \[F\] be the function defined, for all \[x\] in \[[a,b]\], by \[F(x)=\int_{a}^{x}f(t)\,dt\]. Then \[F\] is uniformly continuous on \[[a,b]\] and differentiable on the open interval \[(a,b)\] (it has a derivative at every point strictly between \[a\] and \[b\]), and \[F^{\prime}(x)=\frac{d}{dx}\int_{a}^{x}f(t)\,dt=f(x)\] for all \[x\] in \[(a,b)\]. Note: \[F\] and \[f\] are different functions whose graphs generally look quite different. \[F(x)\] is the accumulated signed area under \[f\] from \[a\] to \[x\], while \[f(x)\] is the slope of \[F\] at \[x\].

In other words, the first fundamental theorem of calculus states that \[F\] is an antiderivative of \[f\], or in mathematical notation, \[F^{\prime}(x)=f(x)\].

Intuitively, \[F(b)=\int_{a}^{b}f(x)\,dx\] "records" the signed area under the curve from \[x=a\] to \[x=b\]. If we move the boundary \[b\] a tiny bit to the right, to \[x=b+\Delta b\], the extra sliver of area is \[F(b+\Delta b)-F(b)\]. Because \[f\] is continuous, it barely changes over \[[b,b+\Delta b]\], so this sliver is approximately a rectangle of width \[\Delta b\] and height \[f(b)\]: \[F(b+\Delta b)-F(b)\approx f(b)\cdot\Delta b\].

Dividing by \[\Delta b\] gives \[\frac{F(b+\Delta b)-F(b)}{\Delta b}\approx f(b)\], and the approximation becomes exact in the limit: \[\lim_{\Delta b\to0}\frac{F(b+\Delta b)-F(b)}{\Delta b}=f(b)\]. The left-hand side is precisely the definition of the derivative \[F^{\prime}(b)=\frac{d}{db}F(b)\], so \[F^{\prime}(b)=f(b)\]. Renaming \[b\] to \[x\], this reads \[\frac{d}{dx}\int_{a}^{x}f(t)\,dt=f(x)\].
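A quick numerical sanity check of this intuition, as a sketch: the Python below approximates \[F\] with composite Simpson's rule (a numerical method chosen here only for illustration; the argument does not depend on it) and prints the difference quotient next to \[f(b)\] for shrinking \[\Delta b\]. The choices \[f=\cos\], \[a=0\], \[b=1\] and the helper names `simpson`, `accumulated_area` and `difference_quotient` are our own assumptions.

```python
import math

def simpson(f, lo, hi, n=1000):
    """Composite Simpson's rule for the integral of f over [lo, hi]; n must be even."""
    if n % 2:
        raise ValueError("n must be even")
    width = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        # Interior points alternate between weight 4 (odd i) and weight 2 (even i).
        total += (4 if i % 2 else 2) * f(lo + i * width)
    return total * width / 3

def accumulated_area(f, a, x):
    """Numerical stand-in for F(x), the integral of f from a to x."""
    return simpson(f, a, x)

def difference_quotient(f, a, b, delta_b):
    """(F(b + delta_b) - F(b)) / delta_b: the new sliver of area divided by its width."""
    return (accumulated_area(f, a, b + delta_b) - accumulated_area(f, a, b)) / delta_b

f, a, b = math.cos, 0.0, 1.0
for delta_b in (0.1, 0.01, 0.001):
    print(delta_b, difference_quotient(f, a, b, delta_b), f(b))
```

As \[\Delta b\] shrinks, the middle column should close in on \[\cos 1\approx0.5403\], the last column.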

Proof of \[F^{\prime}(x)=f(x)\]

First, since \[f\] is continuous, it is integrable on every interval \[[a,x]\subseteq[a,b]\], so \[F(x)=\int_{a}^{x}f(t)\,dt\] exists. A few clarifications:

- \[t\] is a dummy variable and can be replaced with any symbol, such as \[s\] or \[\tau\]. \[x\] is not used because it already serves as the upper limit of integration.

- \[F(x)\] is defined as the integral of \[f(t)\] from \[a\] to \[x\], for every \[x\] in \[[a,b]\]. As \[x\] varies, the interval \[[a,x]\] changes, which changes the accumulated area; this is what makes \[F(x)\] a function of \[x\].

For example, let \[f(x)=x\], so \[f(t)=t\]. Then \[F(x)=\int_{a}^{x}f(t)\,dt=\left[ \frac{t^{2}}{2} \right]^{x}_{a}=\frac{x^{2}}{2}-\frac{a^{2}}{2}\], and differentiating gives \[F^{\prime}(x)=\frac{d}{dx}\left( \frac{x^{2}}{2}-\frac{a^{2}}{2} \right)=x=f(x)\].
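The same example can be checked numerically. This is a minimal sketch that assumes \[a=1\] and builds \[F\] with Simpson's rule instead of the closed form. The names `F`, `F_closed` and `derivative` are illustrative, not from the text. Simpson's rule is exact for \[f(t)=t\] up to rounding, and a central difference is exact for the quadratic \[F\], so the printed values should agree closely with \[\frac{x^{2}}{2}-\frac{1}{2}\] and with \[x\].

```python
def simpson(f, lo, hi, n=200):
    """Composite Simpson's rule; exact (up to rounding) for polynomials of degree <= 3."""
    if n % 2:
        raise ValueError("n must be even")
    width = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * width)
    return total * width / 3

def F(x, a=1.0):
    """F(x) = integral from a to x of f(t) = t, computed numerically."""
    return simpson(lambda t: t, a, x)

def F_closed(x, a=1.0):
    """Closed form from the text: x^2/2 - a^2/2."""
    return x * x / 2 - a * a / 2

def derivative(g, x, h=1e-5):
    """Central-difference approximation of g'(x)."""
    return (g(x + h) - g(x - h)) / (2 * h)

for x in (2.0, 3.0, 4.5):
    print(x, F(x), F_closed(x), derivative(F, x))
```

For instance, at \[x=3\] the closed form gives \[\frac{9}{2}-\frac{1}{2}=4\], and the numerical derivative should be very close to \[3\].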

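We do not need to assume the corollary \[\int_{a}^{b}f(x)\,dx=F(b)-F(a)\] here (it is proved below using this theorem, so assuming it would be circular). All we use is the additivity of the integral over adjacent intervals, \[\int_{a}^{x+h}f(t)\,dt=\int_{a}^{x}f(t)\,dt+\int_{x}^{x+h}f(t)\,dt\], which gives \[F(x+h)-F(x)=\int_{x}^{x+h}f(t)\,dt\].

To prove that \[F^{\prime}(x)=f(x)\], start from the definition of the derivative:

\[F^{\prime}(x)=\lim_{h\to0}\frac{F(x+h)-F(x)}{h}=\lim_{h\to0}\frac{1}{h}\left( \int_{a}^{x+h}f(t)\,dt-\int_{a}^{x}f(t)\,dt \right)=\lim_{h\to0}\frac{1}{h}\int_{x}^{x+h}f(t)\,dt\]

So it remains to show that \[\lim_{h\to0}\frac{1}{h}\int_{x}^{x+h}f(t)\,dt=f(x)\]. First, consider the case where \[h>0\].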

On the interval \[\left[ x,x+h \right]\], \[f\] attains a minimum value \[m\] and a maximum value \[M\] by the extreme value theorem, since \[f\] is continuous on a closed interval. Since \[m\le f(t)\le M\] for every \[t\] in \[\left[ x,x+h \right]\], integrating over this interval preserves the inequalities: \[\int_{x}^{x+h}m\,dt\le\int_{x}^{x+h}f(t)\,dt\le\int_{x}^{x+h}M\,dt\].

Now, \[\int_{x}^{x+h}m\,dt=h\cdot m\] (the area of a rectangle of width \[h\] and height \[m\]), and similarly \[\int_{x}^{x+h}M\,dt=h\cdot M\]. The inequality becomes \[hm\le\int_{x}^{x+h}f(t)\,dt\le hM\], and dividing by \[h>0\] gives \[m\le \frac{1}{h}\int_{x}^{x+h}f(t)\,dt\le M\].
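To make the inequality concrete, here is a small worked instance. The choice \[f(t)=t^{2}\], \[x=1\], \[h=0.1\] is ours, purely for illustration. Since \[t^{2}\] is increasing on \[[1,1.1]\], its minimum and maximum sit at the endpoints:

\[\begin{aligned} m&=f(1)=1,\qquad M=f(1.1)=1.21,\\ \frac{1}{h}\int_{1}^{1.1}t^{2}\,dt&=\frac{1}{0.1}\cdot\frac{1.1^{3}-1^{3}}{3}=\frac{0.331}{0.3}\approx1.1033,\\ 1&\le1.1033\le1.21. \end{aligned}\]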

Note that \[m\] and \[M\] depend on \[h\]. By the extreme value theorem, \[m=f(c_{h})\] and \[M=f(d_{h})\] for some points \[c_{h},d_{h}\in\left[ x,x+h \right]\]. As \[h\to0^{+}\], both \[c_{h}\] and \[d_{h}\] are forced toward \[x\], so by the continuity of \[f\], \[m\to f(x)\] and \[M\to f(x)\].

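By the squeeze theorem, since both \[m\] and \[M\] approach \[f(x)\], it follows that \[\lim_{h\to0^{+}}\frac{1}{h}\int_{x}^{x+h}f(t)\,dt=f(x)\]. The case \[h<0\] is handled the same way on the interval \[\left[ x+h,x \right]\]: there \[\frac{1}{h}\int_{x}^{x+h}f(t)\,dt=\frac{1}{|h|}\int_{x+h}^{x}f(t)\,dt\], which again lies between the minimum and maximum of \[f\] on that interval, and both approach \[f(x)\] as \[h\to0^{-}\]. Since the two one-sided limits agree, \[\lim_{h\to0}\frac{1}{h}\int_{x}^{x+h}f(t)\,dt=f(x)\].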
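A numerical illustration of the squeeze, as a sketch under our own assumptions: we take \[f=\exp\] at \[x=0.5\], because \[\exp\] is increasing, so its minimum and maximum on the interval between \[x\] and \[x+h\] sit at the endpoints. The integral is approximated with Simpson's rule. Both positive and negative \[h\] are tried. `average_value` and `bounds` are hypothetical helper names.

```python
import math

def simpson(f, lo, hi, n=100):
    """Composite Simpson's rule; also valid when hi < lo (the result changes sign)."""
    if n % 2:
        raise ValueError("n must be even")
    width = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * width)
    return total * width / 3

def average_value(f, x, h):
    """(1/h) * integral of f from x to x+h, for h > 0 or h < 0."""
    return simpson(f, x, x + h) / h

def bounds(x, h):
    """m and M for f = exp on the interval between x and x+h (exp is increasing)."""
    return math.exp(min(x, x + h)), math.exp(max(x, x + h))

x = 0.5
for h in (0.1, -0.1, 0.01, -0.01, 0.001, -0.001):
    m, M = bounds(x, h)
    avg = average_value(math.exp, x, h)
    print(f"h={h:+.3f}  m={m:.6f}  avg={avg:.6f}  M={M:.6f}  f(x)={math.exp(x):.6f}")
```

Each row should satisfy \[m\le\text{avg}\le M\], and all three columns should narrow toward \[e^{0.5}\] from both sides as \[|h|\] shrinks.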

Therefore, we've shown that \[F^{\prime}(x)=f(x)\].

Proof of the corollary, \[\int_{a}^{b}f(t)\,dt=F(b)-F(a)\]

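This corollary is a weaker version of the second fundamental theorem of calculus, since it requires \[f\] to be continuous. The second theorem only requires \[f\] to be Riemann integrable on \[[a,b]\] and to have an antiderivative there.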

Suppose \[F\] is an antiderivative of \[f\] on \[\left[ a,b \right]\] (so \[F\] is continuous on \[\left[ a,b \right]\] and \[F^{\prime}(x)=f(x)\] on \[\left( a,b \right)\]), with \[f\] continuous on \[\left[ a,b \right]\]. Let \[G(x)=\int_{a}^{x}f(t)\,dt\]. By the first part, \[G\] is also an antiderivative of \[f\]. Since \[F^{\prime}(x)-G^{\prime}(x)=f(x)-f(x)=0\], \[F\] and \[G\] have the same slope at every point of \[\left( a,b \right)\]. They need not be identical functions, but they can differ by at most a vertical shift.

To prove this, let \[h(x)=F(x)-G(x)\] (a new function, unrelated to the step size \[h\] used above). Then \[h^{\prime}(x)=F^{\prime}(x)-G^{\prime}(x)=0\] for every \[x\] in \[\left( a,b \right)\], and \[h\] is continuous on \[\left[ a,b \right]\] because \[F\] and \[G\] are. Fix any \[x\in\left( a,b \right]\]. By the mean value theorem applied to \[h\] on \[\left[ a,x \right]\], there exists a point \[c\in\left( a,x \right)\] such that \[h^{\prime}(c)=\frac{h(x)-h(a)}{x-a}\]. But \[h^{\prime}(c)=0\], so \[h(x)-h(a)=0\], that is, \[h(x)=h(a)\].

Hence \[h\] takes the same value at every point of \[\left[ a,b \right]\]: it is a constant (e.g. \[h(x)=3\] for all \[x\]), a horizontal line of zero gradient. So \[h(x)=F(x)-G(x)=\text{constant}\], which means there is a constant such that \[G(x)=F(x)+\text{constant}\] for all \[x\] in \[\left[ a,b \right]\]: the two functions differ only by a vertical shift.
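A quick numerical check that two antiderivatives differ by a constant, as a sketch. We take \[f=\cos\] and \[a=0\], compute \[G\] with Simpson's rule, and pick \[F(x)=\sin x+2\] as an arbitrary second antiderivative (the \[+2\] is our choice). Then \[h(x)=F(x)-G(x)\] should stay at \[2\] up to numerical error.

```python
import math

def simpson(f, lo, hi, n=1000):
    """Composite Simpson's rule for the integral of f over [lo, hi]; n must be even."""
    if n % 2:
        raise ValueError("n must be even")
    width = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * width)
    return total * width / 3

def G(x):
    """G(x) = integral of cos from 0 to x, approximated numerically (a = 0)."""
    return simpson(math.cos, 0.0, x)

def F(x):
    """A second antiderivative of cos, chosen arbitrarily for illustration."""
    return math.sin(x) + 2.0

for x in (0.0, 0.7, 1.5, 3.0):
    # h(x) = F(x) - G(x) should print the same number for every x.
    print(x, F(x) - G(x))
```

Note that \[h(0)=F(0)-G(0)=F(0)\], because \[G(0)=\int_{0}^{0}\cos t\,dt=0\].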

To find the constant, set \[x=a\]: \[F(a)+\text{constant}=G(a)=\int_{a}^{a}f(t)\,dt=0\], so the constant must be \[-F(a)\].
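A worked instance of finding the constant, with numbers of our own choosing for illustration. Take \[f(t)=t\], \[a=1\], and the antiderivative \[F(x)=\frac{x^{2}}{2}+7\]. Then

\[\begin{aligned} G(x)&=\int_{1}^{x}t\,dt=\frac{x^{2}}{2}-\frac{1}{2},\\ \text{constant}&=-F(1)=-\left( \frac{1}{2}+7 \right)=-\frac{15}{2},\\ F(x)+\text{constant}&=\frac{x^{2}}{2}+7-\frac{15}{2}=\frac{x^{2}}{2}-\frac{1}{2}=G(x). \end{aligned}\]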

In other words, \[G(x)=F(x)-F(a)\], and so \[\int_{a}^{b}f(t)\,dt=G(b)=F(b)-F(a)\].
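Finally, a numerical spot check of \[\int_{a}^{b}f(t)\,dt=F(b)-F(a)\], as a sketch. The left side is approximated with Simpson's rule and the right side uses a known antiderivative. The three test functions and the helper name `check_corollary` are our own picks.

```python
import math

def simpson(f, lo, hi, n=1000):
    """Composite Simpson's rule for the integral of f over [lo, hi]; n must be even."""
    if n % 2:
        raise ValueError("n must be even")
    width = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * width)
    return total * width / 3

def check_corollary(f, F, a, b):
    """Return (numerical integral of f over [a, b], F(b) - F(a))."""
    return simpson(f, a, b), F(b) - F(a)

# (description, f, an antiderivative F, a, b)
cases = [
    ("1/t on [1, e]", lambda t: 1.0 / t, math.log, 1.0, math.e),
    ("cos on [0, pi/2]", math.cos, math.sin, 0.0, math.pi / 2),
    ("3t^2 on [1, 2]", lambda t: 3 * t * t, lambda t: t ** 3, 1.0, 2.0),
]
for name, f, F, a, b in cases:
    numeric, exact = check_corollary(f, F, a, b)
    print(f"{name}: {numeric:.10f} vs {exact:.10f}")
```

The two columns should agree to many decimal places. The exact values are \[1\], \[1\] and \[2^{3}-1^{3}=7\], respectively.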