Matrix multiplication

Intuition and definition

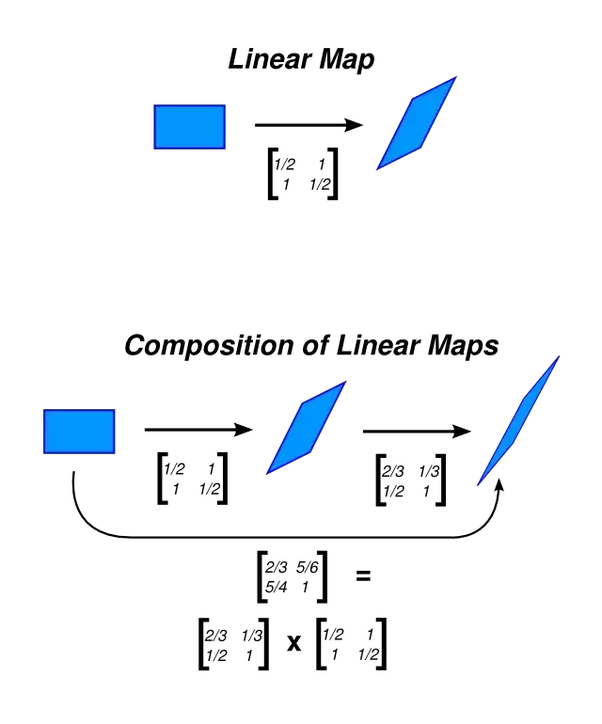

Matrix multiplication is a symbolic way of substituting one linear change of variables into another one, or in a more familiar notation, the composition of two linear functions, e.g. \[g(f(x))\].

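Let \[x^{\prime}=ax+by\] and \[y^{\prime}=cx+dy\], and let \[x^{\prime\prime}=a^{\prime}x^{\prime}+b^{\prime}y^{\prime}\] and \[y^{\prime\prime}=c^{\prime}x^{\prime}+d^{\prime}y^{\prime}\]. To express \[x^{\prime\prime}\] and \[y^{\prime\prime}\] in terms of \[x\] and \[y\], substitute the first pair of equations into the second:

\begin{align*} x^{\prime\prime}&=a^{\prime}(ax+by)+b^{\prime}(cx+dy)=(a^{\prime}a+b^{\prime}c)x+(a^{\prime}b+b^{\prime}d)y \\ y^{\prime\prime}&=c^{\prime}(ax+by)+d^{\prime}(cx+dy)=(c^{\prime}a+d^{\prime}c)x+(c^{\prime}b+d^{\prime}d)y \end{align*}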

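Writing out all of these variables is tedious, so we use arrays to keep track of the coefficients. Substituting the first pair of equations into the second coincides with the matrix product:

\begin{align*} \begin{pmatrix} a^{\prime} & b^{\prime} \\ c^{\prime} & d^{\prime} \end{pmatrix} \begin{pmatrix} a & b \\ c & d \end{pmatrix} = \begin{pmatrix} a^{\prime}a+b^{\prime}c & a^{\prime}b+b^{\prime}d \\ c^{\prime}a+d^{\prime}c & c^{\prime}b+d^{\prime}d \end{pmatrix} \end{align*}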

So matrix multiplication is just a bookkeeping device for systems of linear substitutions plugged into one another. The formula may not look intuitive at first, but it encodes one simple idea: applying two linear changes of variables in succession.

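The notation above is a little convoluted, so let's take a simple example: \[u=3x+7y,v=-2x+11y\] and \[p=13u-20v,q=2u+6v\]. Expressing \[p\] and \[q\] in terms of \[x\] and \[y\] gives \[p=79x-129y,q=-6x+80y\], which coincides with

\begin{align*} \begin{pmatrix} 13 & -20 \\ 2 & 6 \end{pmatrix} \begin{pmatrix} 3 & 7 \\ -2 & 11 \end{pmatrix} = \begin{pmatrix} 79 & -129 \\ -6 & 80 \end{pmatrix} \end{align*}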
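The worked example can also be checked numerically. The sketch below uses plain Python; the helper name `matmul` and the list-of-rows representation are assumptions made for illustration only.

```python
def matmul(P, Q):
    """Multiply two matrices given as lists of rows (illustrative helper)."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))]
            for i in range(len(P))]

# Coefficients of (p, q) in terms of (u, v), and of (u, v) in terms of (x, y).
outer = [[13, -20], [2, 6]]
inner = [[3, 7], [-2, 11]]

combined = matmul(outer, inner)
print(combined)  # [[79, -129], [-6, 80]]

# Substituting step by step gives the same values for a sample (x, y).
x, y = 2, 5
u, v = 3 * x + 7 * y, -2 * x + 11 * y
p, q = 13 * u - 20 * v, 2 * u + 6 * v
print(p == combined[0][0] * x + combined[0][1] * y)  # True
print(q == combined[1][0] * x + combined[1][1] * y)  # True
```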

Now you may be wondering why this is needed. A simple answer is that matrices represent linear transformations in a natural way, and the product of two matrices represents the mapping obtained by applying one transformation followed by the other.

To extend the image above, we'll demonstrate this with functions. Let \[f:\mathbb{R}^{2}\to\mathbb{R}^{3}\] and \[g:\mathbb{R}^{3}\to \mathbb{R}^{4}\] be defined by \[f(\begin{pmatrix} x\\y \end{pmatrix})=\begin{pmatrix} x+2y\\x-y\\x \end{pmatrix}\] and \[g(\begin{pmatrix} a\\b\\c \end{pmatrix})=\begin{pmatrix} a+b+c\\c\\0\\a \end{pmatrix}\].

With the way we defined both functions, only the composition \[g(f(x))\] makes sense, not \[f(g(x))\]. The output of \[g\] is a vector in \[\mathbb{R}^{4}\], but \[f\] requires an input in \[\mathbb{R}^{2}\], so \[f(g(x))\] is undefined. This also explains why the condition \[n_{1}=m_{2}\] (defined later on) is a requirement for matrix multiplication.

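If we expand \[g(f(x))\], we get

\begin{align*} g\left(f\begin{pmatrix} x\\y \end{pmatrix}\right)=g\begin{pmatrix} x+2y\\x-y\\x \end{pmatrix}=\begin{pmatrix} (x+2y)+(x-y)+x\\x\\0\\x+2y \end{pmatrix}=\begin{pmatrix} 3x+y\\x\\0\\x+2y \end{pmatrix} \end{align*}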

We can also rewrite \[\begin{pmatrix} x+2y\\x-y\\x \end{pmatrix}=\begin{pmatrix} 1&2\\1&-1\\1&0 \end{pmatrix}\cdot\begin{pmatrix} x\\y \end{pmatrix}=A\cdot\mathbf{x}\] and \[\begin{pmatrix} a+b+c\\c\\0\\a \end{pmatrix}=\begin{pmatrix} 1&1&1\\0&0&1\\0&0&0\\1&0&0 \end{pmatrix}\cdot\begin{pmatrix} a\\b\\c \end{pmatrix}=B\cdot\mathbf{x}\]. Thus, \[\begin{pmatrix} 3x+y\\x\\0\\x+2y \end{pmatrix}=\begin{pmatrix} 3&1\\1&0\\0&0\\1&2 \end{pmatrix}\cdot\begin{pmatrix} x\\y \end{pmatrix}=C\cdot\mathbf{x}\].

Composing \[g(f(\mathbf{x}))\] once more we get \[g(f(\mathbf{x}))=g(A\mathbf{x})=B(A\mathbf{x})=C\mathbf{x}\], which brings us back to matrix multiplication \[BA\mathbf{x}=C\mathbf{x}\].
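As a quick check of the identity above, the following sketch compares the composed functions with the matrices \[A\], \[B\] and \[C\] on a few sample vectors. The helper `matvec` and the tuple representation of vectors are assumptions for illustration.

```python
def f(x, y):
    return (x + 2 * y, x - y, x)

def g(a, b, c):
    return (a + b + c, c, 0, a)

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector (illustrative helper)."""
    return tuple(sum(m * t for m, t in zip(row, v)) for row in M)

A = [[1, 2], [1, -1], [1, 0]]                    # matrix of f
B = [[1, 1, 1], [0, 0, 1], [0, 0, 0], [1, 0, 0]]  # matrix of g
C = [[3, 1], [1, 0], [0, 0], [1, 2]]             # the product BA from the text

for v in [(1, 0), (0, 1), (2, -3)]:
    composed = g(*f(*v))
    # g(f(v)), B(Av) and Cv should all agree.
    print(composed == matvec(B, matvec(A, v)) == matvec(C, v))  # True
```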

Scalar

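Assume \[A\] is a \[2\times2\] matrix and \[k\] is a scalar. Multiplying \[A\] by \[k\] multiplies every entry of \[A\] by \[k\]:

\begin{align*} kA=k\begin{pmatrix} a & b \\ c & d \end{pmatrix}=\begin{pmatrix} ka & kb \\ kc & kd \end{pmatrix} \end{align*}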

Matrices

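Consider two matrices of orders \[m_{1}\times n_{1}\] and \[m_{2}\times n_{2}\]. They can be multiplied together, in that order, only if \[n_{1}=m_{2}\], as explained above; the product then has order \[m_{1}\times n_{2}\]. For example, the \[4\times3\] matrix \[B\] and the \[3\times2\] matrix \[A\] above give the \[4\times2\] product \[BA\], whereas \[AB\] is not defined because \[2\ne4\].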

Matrix multiplication is associative. For a product of three or more matrices, say \[A\], \[B\], \[C\], \[D\], the grouping does not matter as long as the order of the factors is kept: \[(AB)CD\] and \[A(BC)D\] give the same answer.

Let \[A=(a_{ij})\] be an \[m\times n\] matrix and \[B=(b_{ij})\] be an \[n\times p\] matrix. The matrix product \[AB\] is defined to be the \[m\times p\] matrix whose \[(i,j)\] entry is \[\sum_{k=1}^{n}(a_{ik}\cdot b_{kj})\]. For example, for the entry in the first row and second column of \[AB\], the formula is shorthand for \[a_{11}b_{12}+a_{12}b_{22}+\cdots+a_{1n}b_{n2}\].
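The definition translates directly into three nested loops. The following is a minimal sketch in plain Python, assuming matrices are stored as lists of rows; the function name `matmul` and the sample matrices are illustrative only.

```python
def matmul(A, B):
    """Return AB for an m-by-n matrix A and an n-by-p matrix B (lists of rows)."""
    m, n = len(A), len(A[0])
    if len(B) != n:
        # The number of columns of A must equal the number of rows of B.
        raise ValueError("inner dimensions do not match")
    p = len(B[0])
    C = [[0] * p for _ in range(m)]
    for i in range(m):          # row of the result
        for j in range(p):      # column of the result
            for k in range(n):  # sum over the shared index
                C[i][j] += A[i][k] * B[k][j]
    return C

A = [[1, 2, 3], [4, 5, 6]]       # 2 x 3
B = [[7, 8], [9, 10], [11, 12]]  # 3 x 2
print(matmul(A, B))  # [[58, 64], [139, 154]]
```

Note that Python indices start at 0, so `C[0][1]` holds the entry in the first row and second column.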

Non-commutativity

An operation is commutative if, for any two elements \[A\] and \[B\] such that the product \[AB\] is defined, \[BA\] is also defined and \[AB=BA\]. For matrices, in general \[AB\ne BA\] (and \[BA\] need not even be defined), so matrix multiplication is non-commutative. To demonstrate this, \[\begin{pmatrix} 0&1\\0&0 \end{pmatrix}\begin{pmatrix} 0&0\\1&0 \end{pmatrix}=\begin{pmatrix} 1&0\\0&0 \end{pmatrix}\] but \[\begin{pmatrix} 0&0\\1&0 \end{pmatrix}\begin{pmatrix} 0&1\\0&0 \end{pmatrix}=\begin{pmatrix} 0&0\\0&1 \end{pmatrix}\]. There are, however, special cases in which the two products agree, described below.

- Zero matrix

We define a zero matrix as

\begin{align*} 0_{mn}= \begin{pmatrix} 0 & 0 & \cdots \\ 0 & 0 & \cdots \\ \vdots & \vdots & \ddots \\ \end{pmatrix} \end{align*}

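The product of any matrix with a zero matrix of compatible size is a zero matrix. In particular, if \[A\] is a square matrix and \[B\] is the zero matrix of the same size, then \[AB=BA=0\].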

- Powers

Assume:

\begin{align*} A= \begin{pmatrix} 2 & 5 \\ 1 & 2 \\ \end{pmatrix},\, A^{2}= \begin{pmatrix} 9 & 20 \\ 4 & 9 \\ \end{pmatrix} \end{align*}

Here, \[AA^{2}=A^{2}A=A^{3}\], and in general \[A^{m}A^{n}=A^{n}A^{m}=A^{m+n}\] for any square matrix \[A\] and positive integers \[m\] and \[n\].
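The claim about powers can be checked on the matrix above. This is a sketch in plain Python; the helpers `matmul` and `matpow` are hypothetical names introduced for illustration, and `matpow` assumes a positive integer exponent.

```python
def matmul(P, Q):
    """Multiply two matrices given as lists of rows (illustrative helper)."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))]
            for i in range(len(P))]

def matpow(M, n):
    """Raise a square matrix M to a positive integer power n by repeated multiplication."""
    result = M
    for _ in range(n - 1):
        result = matmul(result, M)
    return result

A = [[2, 5], [1, 2]]
A2 = matpow(A, 2)
print(A2)                              # [[9, 20], [4, 9]]
print(matmul(A, A2) == matmul(A2, A))  # True
print(matmul(matpow(A, 2), matpow(A, 3)) == matpow(A, 5))  # True
```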

- Identity matrix

If \[A\] is an \[m\times n\] matrix, then \[I_{m}A=AI_{n}=A\]. In particular, a square matrix \[A\] commutes with the identity matrix of the same size.

Matrix multiplication happens columnwise

Consider multiplying an \[m\times n\] matrix by an \[n\times 1\] column vector. Let \[A=\begin{pmatrix} a&b&c\\d&e&f \end{pmatrix}\], \[\mathbf{x}=\begin{pmatrix} x\\y\\z \end{pmatrix}\]. Then \[A\mathbf{x}=\begin{pmatrix} ax+by+cz\\dx+ey+fz \end{pmatrix}\], which can also be written as \[x\begin{pmatrix} a\\d \end{pmatrix}+y\begin{pmatrix} b\\e \end{pmatrix}+z\begin{pmatrix} c\\f \end{pmatrix}\]. If we denote the \[j\]th column of \[A\] by \[\mathbf{c}_{j}\], the expression simplifies to \[x\mathbf{c}_{1}+y\mathbf{c}_{2}+z\mathbf{c}_{3}\], which is known as a linear combination of \[\mathbf{c}_{1},\mathbf{c}_{2},\mathbf{c}_{3}\].
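The two ways of computing \[A\mathbf{x}\] can be compared on a concrete case. The sketch below uses plain Python with sample numbers chosen for illustration; the variable names `row_view` and `col_view` are assumptions.

```python
A = [[1, 2, 3],
     [4, 5, 6]]
x = [2, -1, 3]

# Row view: each output entry is a row of A dotted with x.
row_view = [sum(a * t for a, t in zip(row, x)) for row in A]

# Column view: add up the columns of A, each scaled by the matching entry of x.
columns = [list(col) for col in zip(*A)]  # [[1, 4], [2, 5], [3, 6]]
col_view = [0] * len(A)
for weight, col in zip(x, columns):
    col_view = [acc + weight * entry for acc, entry in zip(col_view, col)]

print(row_view)  # [9, 21]
print(col_view)  # [9, 21]
```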